Written by: Sangeeta Vishwanath, Head of Digital at Mantel

Breaking news: OpenAI just hired Peter Steinberger, the founder of OpenClaw, to drive the next generation of personal agents.

OpenClaw, also known as ClawdBot and Moltbot, is the viral, open-source ambient agent platform that pulled 2 million visitors in a single week and has over 200,000 GitHub stars. The project is now moving into a foundation structure, with continued support from OpenAI.

At the same time, China’s industry ministry has issued a warning that OpenClaw poses significant security risks when configured improperly, exposing users to cyberattacks and data breaches – the highest-profile of a range of voices expressing concern about the security implications of OpenClaw.

One side is betting big on ambient agents. The other is sounding an alarm. Both are, however, telling you the same thing: that this is a material shift in the digital landscape, and that you had better be paying attention.

We’ve been right in the middle of this shift at Mantel, helping delivery teams trial and adopt tools like Gemini Code Assist, Claude Code, Amazon Kiro and GitHub Copilot, and watching them evolve from “this is a helpful coding tool” to “this can plan work and run our development workflows.”

In my opinion, this OpenAI move confirms something I’ve long felt. Soon, the difference between platforms won’t be model capability: It will be trust architecture.

I am not speaking about trust as a vague concept, but as a designed and enforceable set of boundaries that define what the agent can do, when it can do it, when it must seek our intervention, and how we can audit it.

The world has moved quickly

Less than 12 months ago, during the Copilot era, most AI conversations centred on autocomplete functionality. The beginning of the shift to the Agent era marked a fundamental change: models like Claude and newer versions of ChatGPT began to function less as autocomplete tools and more as junior teammates capable of planning tasks, executing them, and revising their work based on feedback. This evolution has been vastly enhanced through better scaffolding techniques, including agent skills and specialised sub-agents that handle discrete tasks before returning results to the primary agent.

This era isn’t necessarily over, but I believe it is no longer the frontier.

What sets OpenClaw apart is that it doesn’t wait for a prompt. It stays on top of your emails, deals with insurers, scans for rental properties that match your needs, checks in for flights and handles whatever else you point it at. It observes, anticipates and acts. The platform’s rapid rise to viral status led to its creator joining OpenAI, where he’s now building the next generation of personal agents.

This shift has captured widespread attention because it triggers a different kind of unease: one that autocomplete never really provoked.

The strong opinions

Supporters of OpenClaw love it because it feels like working with a colleague who’s already thinking ahead. You’re in a repo and it’s telling you that the build has failed and that it has created a PR with the fix, waiting to be reviewed.

Detractors point to the obvious things: a weak security posture, vulnerabilities, agents going rogue, data leakage and (inevitably) a little bit of “the agents are plotting the end of humanity.”

It has been an amusing year and it’s only February.

My feeling is that both sides are right about different things. The capability is real but so are the risks. If you see only one side, you are going to miss what’s actually happening. We’re transitioning from AI as a tool that we use to AI as an entity that we delegate to. That is why I bring up trust.

“We don't leave human teammates ungoverned. We build role-based access controls, segregation of duties, approval gates and audit trails. This is not because we assume bad intent, but because we plan for the damage a bad actor could do. Agents require the same controls.”

Sangeeta Vishwanath, Head of Digital, Mantel

From tool to digital colleague

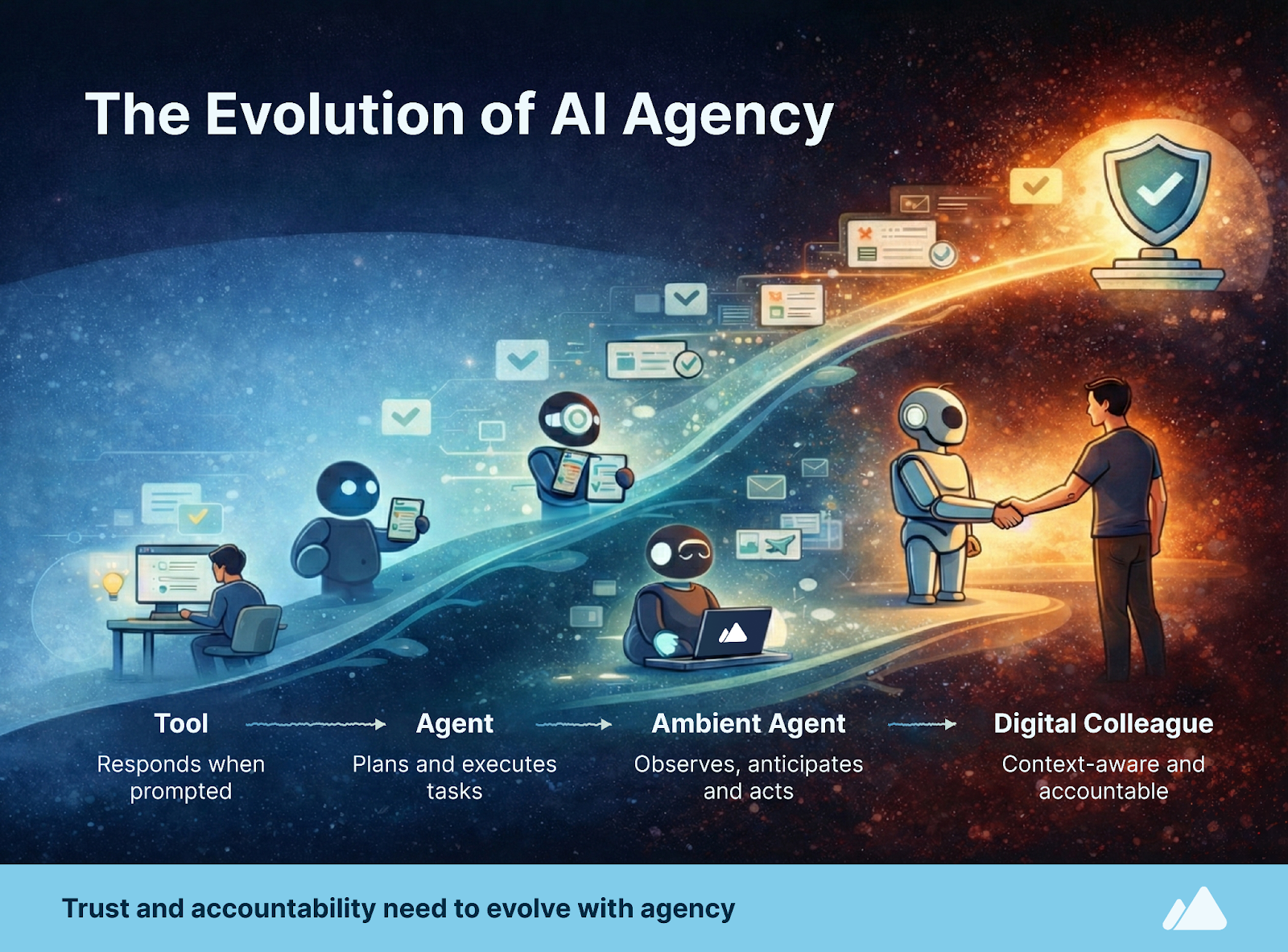

Here is an AI maturity curve that we’ve been using for over a year in our client conversations.

| Phase | Behaviour | Relationship |
| --- | --- | --- |
| Tool | Responds when prompted | User operates the tool |
| Agent | Plans and executes tasks | User delegates to the agent |
| Ambient agent | Observes, anticipates, acts | Agent works alongside the user |
| Digital colleague | Context-aware, accountable, bounded | Agent and user share responsibility |

The Digital Colleague framing forces a question that tools can ignore. If this thing is going to act like a teammate, what qualities do we require before we let it sit in the team, with our people? After all, no one wants to work with a colleague who publishes a hit-piece on us because we declined their pull request.

We need the agent to develop, and the underlying platform to enforce, a few kinds of awareness:

- Role awareness and accountability. A real teammate understands shared goals and priorities. They know what good looks like for this team. They can explain what they did and why and can be held accountable.

- Risk awareness. The best engineers aren’t just good at building things, they’re also good at anticipating what could go wrong. They know which changes are reversible and which are not. Agents also need operational risk awareness. They need to know when a human has to be in the loop before touching production or changing critical modules such as auth.

- Authority awareness. A teammate knows what they’re allowed to do and what needs approval. They understand boundaries and escalation paths. Agents need explicit, enforced, comprehensible permission boundaries.

We have seen some patterns emerge in this direction. Just-in-time privileges allow us to apply least privilege dynamically. If an agent needs to open a PR, let it open one but not merge it. If the agent needs to make a purchase, give it a scoped token that expires after a single use.
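To make the just-in-time idea concrete, here is a minimal sketch of a scoped, single-use, time-limited grant. Everything in it is illustrative: `Grant`, `GrantStore` and the action names such as `pr:open` are hypothetical, not part of OpenClaw or any real platform, and a production system would also need persistence, auditing and revocation.

```python
import secrets
import time
from dataclasses import dataclass, field


@dataclass
class Grant:
    """Hypothetical just-in-time grant: one action, limited uses, short lifetime."""
    action: str
    expires_at: float
    uses_left: int = 1
    token: str = field(default_factory=lambda: secrets.token_urlsafe(16))


class GrantStore:
    """In-memory store of grants (an assumption made to keep the sketch short)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._grants = {}

    def issue(self, action, ttl_seconds, uses=1):
        """Create a grant scoped to a single action and return its token."""
        grant = Grant(action=action,
                      expires_at=self._clock() + ttl_seconds,
                      uses_left=uses)
        self._grants[grant.token] = grant
        return grant.token

    def authorize(self, token, action):
        """Return True only if the token covers this action, is unexpired and has uses left."""
        grant = self._grants.get(token)
        if grant is None or grant.action != action:
            return False
        if self._clock() >= grant.expires_at or grant.uses_left <= 0:
            self._grants.pop(token, None)  # clean up dead grants
            return False
        grant.uses_left -= 1
        if grant.uses_left == 0:
            del self._grants[token]  # single-use tokens vanish once spent
        return True
```

With this shape, a token issued for `pr:open` cannot be used to merge, and it stops working after its first successful use or once its lifetime runs out, whichever comes first.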

Trust is undoubtedly the differentiator

A year ago, capability gaps between tools were wide enough that you could pick winners just by model performance. Now, however, the gaps are closing, and models and feature sets are converging.

Ambient agency is what sets OpenClaw apart right now, but I don’t think that advantage stays exclusive for long. Other platforms will adopt the pattern because it’s so powerful, and because users will demand it once they’ve felt it. What they will also demand is a platform they can trust.

My instinct is that critical mass adoption now depends on trust architecture. Trust is deeply personal and depends on your risk tolerance, the type of work you’re doing, the client context and frankly your temperament. If we want agents to move from novelty to mundane – and mundane is good because it is not risky or scary, but dependable in its routine and dullness – we need the ability to govern when, where, and how an agent is allowed to act on our behalf.

I have lived this debate once already, in a different form almost two decades ago.

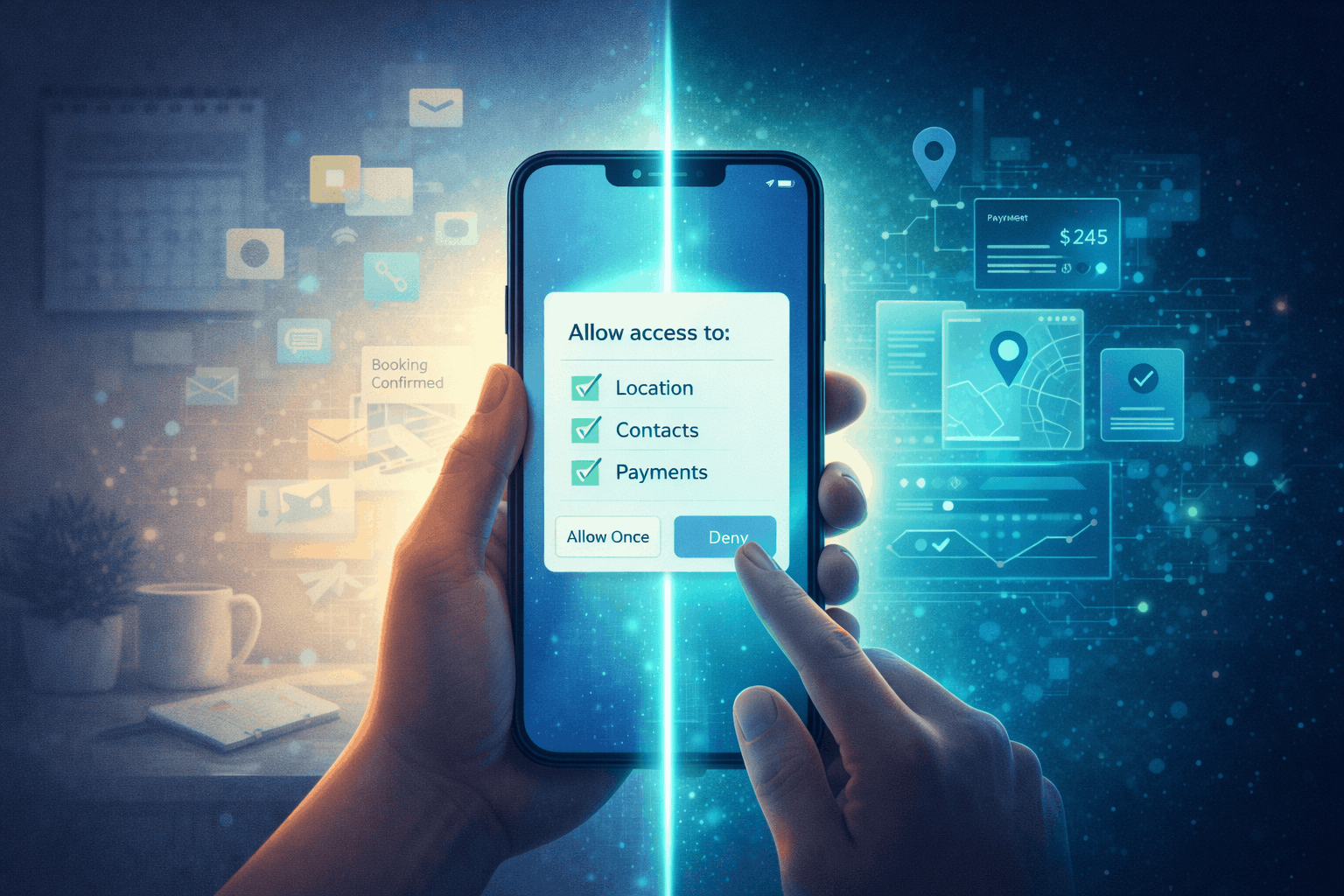

In the early days of smartphones, when apps started asking for access to everything, we didn’t have a mental model for what was happening – we just had a gut feeling that said, this feels risky. I better pay attention.

The tech giants we know today took different philosophical paths.

Apple (iOS) went hard on rigid, enforced permission structures. The system is opinionated, harder to circumvent and there are real consequences for violations. They implemented security by constraint.

Google (Android) implemented security by choice and historically leaned more flexible and more configurable. Over time Google tightened up its stance, but its ethos has always been to give users control.

The two models served different trust preferences and created huge, world-altering adoption.

Map this model to ambient agents. An ambient agent knows your context and can take actions (edit files, run commands, open PRs, message people, manage priorities, buy things). This is a permission surface area.

If the permission model is sloppy, cautious users never let the agent have control, while busy users click “Allow” on everything until something goes wrong. Neither outcome is desirable for a platform owner. This is where the two OpenClaw camps are currently at.

What we need is a permission system that can span both ends:

- A security by constraint model that is rigid and makes it hard to shoot yourself in the foot for teams who want strict boundaries.

- A security by choice model that lets you grant and revoke task scoped access and has power user workflows for teams who want flexibility.

The mobile permission model made sense for laypeople and power users alike. Agents will need the same practical situational controls:

- “Allow me to deploy build #514 to staging?”

- “Allow me to access customer logs for the next 15 minutes to investigate this incident?”

- “Allow me to use your credit card once to book your shortlisted flights to Vietnam?”

The question is no longer whether the agent is capable of doing a task. The question is: “Do I trust it right now, with this data, to take this action?”

And that’s what I mean by trust architecture.
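One way to picture a permission system that spans both ends is a single decision function with two modes. The sketch below is an assumption-laden illustration, not how any existing agent platform works: `Request`, `decide`, the `constraint` and `choice` mode names and the allowlist format are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"


@dataclass(frozen=True)
class Request:
    """A situational permission request raised by the agent."""
    action: str                              # e.g. "deploy"
    resource: str                            # e.g. "staging"
    duration_minutes: Optional[int] = None   # None means a single use


def decide(request, mode, allowlist, ask_user):
    """Resolve a request under one of two hypothetical trust modes.

    "constraint": only pre-approved (action, resource) pairs pass; no prompts.
    "choice": pre-approved pairs pass, everything else is put to the user.
    """
    key = (request.action, request.resource)
    if mode == "constraint":
        return Decision.ALLOW if key in allowlist else Decision.DENY
    if mode == "choice":
        if key in allowlist:
            return Decision.ALLOW
        return Decision.ALLOW if ask_user(request) else Decision.DENY
    raise ValueError(f"unknown mode: {mode}")


# Example: a strict team pre-approves staging deploys and nothing else.
allowlist = {("deploy", "staging")}
strict = decide(Request("deploy", "production"), "constraint",
                allowlist, ask_user=lambda r: True)
# A flexible team lets the user approve log access of up to 15 minutes.
flexible = decide(Request("read", "customer-logs", 15), "choice",
                  allowlist, ask_user=lambda r: r.duration_minutes <= 15)
```

In constraint mode the user's answer is never consulted, which is what makes it hard to shoot yourself in the foot. In choice mode the `ask_user` callback stands in for the "Allow me to…" prompt, and it sees the action, the resource and the requested duration before answering.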

What does this mean for adoption?

Clear, enforceable, user-governed trust models for agents will enable adoption, not because they’re exciting, but because they are the bedrock of agents that people and organisations can safely integrate into real work.

If you’re a leader evaluating tools, I’d encourage you to stop asking only “how good is the model?” and start asking “what is the trust model, and does it provide the balance between agency and security that my team requires?”

If ambient agents are going to become digital colleagues, we don’t just need smarter models. We need boundaries we can live with.

This is the first part in a series on OpenClaw, AI agents, and the effect they will have on security postures and interaction design going forward.